The 2011 Institute of Medicine (IOM) report, “Health IT and Patient Safety: Building Safer Systems for Better Care,” concluded that while health IT has massive potential to improve care, its current, rapid, and often uncoordinated implementation poses significant risks to patient safety. The report called for a shift to a “sociotechnical” approach, emphasizing that safety issues often stem from system design and user interaction, not just technical failure. It notably recommended against immediate, heavy-handed regulation, urging a focus on voluntary reporting systems, improved usability, and a “culture of safety.”

Key Takeaways and Recommendations

Safety Risks: The report highlighted that, although Electronic Health Records (EHRs) and other tools can reduce errors (e.g., in prescribing), they can also introduce new risks such as data entry errors, alert fatigue, and system downtime.

10 Recommendations: The IOM provided 10 recommendations, which focused on improving health IT safety through better design, implementation, and the creation of a non-punitive reporting system for errors.

Focus on Usability: A key finding was that user-centered design is crucial, as poor usability can lead to hazardous situations for patients.

Need for Actionable Data: The report urged that data on IT-related safety events be collected, shared, and used to improve systems, not to punish providers.

A “Sociotechnical” Approach: The report argued that, rather than solely focusing on the software itself, safety must be addressed through a “sociotechnical” approach, understanding that technology, people, and processes are interdependent.

The report’s findings were intended to guide the Office of the National Coordinator for Health IT (ONC) and other regulatory bodies in developing policies that maximize the benefits of technology while minimizing its sometimes dangerous risks.

Following the IOM report, ONC integrated mandatory Safety-Enhanced Design (SED) and User-Centered Design (UCD) requirements into its certification program to mitigate technology-induced safety risks. These standards require developers to conduct summative usability testing on critical functions, such as CPOE and medication reconciliation, and to publicly report the results via the Certified Health IT Product List (CHPL).

These updates moved beyond voluntary standards to mandatory Conditions and Maintenance of Certification, requiring developers to provide explicit assurances that their software meets all technical and safety criteria.

Usability and Safety Certification Requirements

In response to the IOM’s call for safer systems, ONC implemented several key requirements for Certified Health IT Modules:

User-Centered Design (UCD): Vendors must attest to using a formal UCD process for critical safety functions like drug-drug interaction checks and computerized provider order entry (CPOE).

Formal Usability Testing: Developers must conduct and publicly report the results of summative usability testing, including the number and clinical backgrounds of participants.

Surveillance and Direct Review: ONC established a framework for “in-the-field” surveillance and Direct Review, allowing it to investigate, and if necessary terminate, the certification of products that fail to maintain safety standards in real-world use.

\[1, 5, 6, 7\]

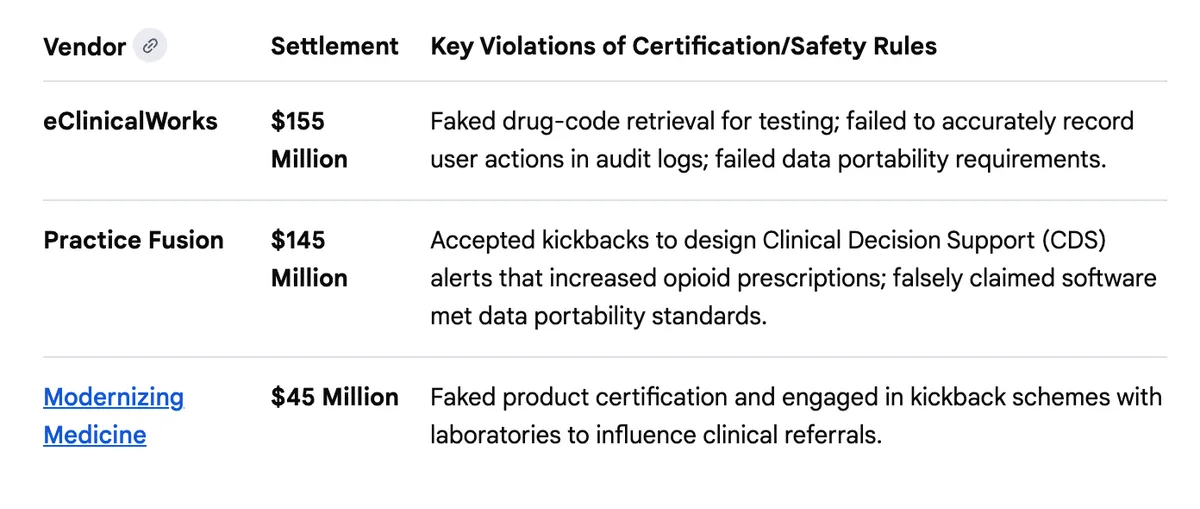

Accountability for Fraud: DOJ Enforcement

The Department of Justice (DOJ) used these specific certification requirements, which involve formal attestations to the government, to hold vendors accountable under the False Claims Act (FCA). DOJ alleged that by falsely claiming their software met ONC standards, these vendors caused healthcare providers to submit false claims for federal incentive payments.

All of these pieces matter. Health IT systems are now the backbone of how health care is administered in the United States. We have regulatory infrastructure for food, for medications, for transportation, and for water. DOJ’s pursuit of companies that violated the regulations has sent a firm message: safety is important, and the certification requirements are important elements of preserving the safety of 330 million people.

But now the federal government is proposing to remove the safety requirements that underpin this backbone, and in doing so, it puts us all at risk.

In January 2026, ONC proposed HTI-5, a rule that would remove or relax several health IT certification requirements, including safety standards that have been in place since the IOM’s 2011 report on health IT and patient harm.

The comment period closed on February 27, with 298 unique public comments submitted. I read them all, and then I built a tool to help everyone else read them too.

This post is about what those comments say, how I analyzed them, and why I think this kind of transparent analysis matters.

Why This Rule Matters

If you work in health care, health IT touches almost everything you do. The certification requirements ONC is proposing to remove aren’t abstractions; they’re the rules that require your EHR to have audit logs, safety-enhanced design, quality management systems, and clinical decision support transparency.

The stated goal is burden reduction. The concern, raised loudly throughout the comment record, is that removing these requirements doesn’t reduce burden; it removes the floor.

What the Comments Say

The headline: strong opposition to removing safety standards

Of the 298 comments, 127 oppose the proposed deregulatory actions (89 strongly), while 70 support them. Another 72 are mixed or neutral, and 29 are unclear.
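As a quick check on the arithmetic, the four position buckets should account for every unique comment, and their shares follow directly from the counts. A minimal sketch using only the numbers reported above (the bucket labels are my own shorthand):

```python
# Position counts as reported in the comment analysis (out of 298 unique comments).
counts = {
    "oppose": 127,           # includes the 89 that strongly oppose
    "support": 70,
    "mixed_or_neutral": 72,
    "unclear": 29,
}

total = sum(counts.values())
assert total == 298  # the buckets cover every unique comment exactly once

for position, n in counts.items():
    # Format each bucket as a count plus a one-decimal percentage share.
    print(f"{position:>16}: {n:3d} ({n / total:.1%})")
```

With these counts, opposition makes up about 42.6% of unique comments, support about 23.5%, mixed or neutral about 24.2%, and unclear about 9.7%.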

Who said what

The opposition isn’t coming from one corner. Professional associations (53 comments), advocacy groups (29), individual clinicians (20), health systems (23), and patient safety organizations all weighed in against removing safety criteria.

Support came primarily from health IT companies and EHR developers: organizations that bear the compliance costs. That’s a legitimate perspective, but it’s important to see who is on which side.

The themes

The most-discussed topics, in order:

FHIR standards (238 mentions). Broad support for modernizing around FHIR, but concern about removing C-CDA before FHIR is ready for all use cases.

Burden reduction (218). Everyone wants less burden, but there’s deep disagreement about whether removing certification criteria is the way to get it.

Patient safety (136). The single most contested issue: should safety-enhanced design, audit logs, and quality management be required or voluntary?

AI regulation (109). Strong calls to extend CDS transparency requirements to cover AI rather than remove them.

Data privacy (105). Concerns that relaxing standards creates new privacy risks.

Coordinated campaigns

Of all comments, 22.8% came from organized campaigns: legal networks, vendor blocs, clinician coalitions, and government opposition groups. This isn’t unusual in federal rulemaking, but it’s worth knowing. The analysis identifies five distinct campaigns and lets you see which comments are independent and which are coordinated.
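The post doesn’t describe how the campaigns were detected. One common way to flag form-letter campaigns, offered here as an assumption rather than as the method hti5.org actually uses, is to break each comment into overlapping word n-grams (“shingles”), compare comments by Jaccard similarity, and cluster any pair above a threshold. The shingle size `k=5`, the `0.6` threshold, and all function names below are hypothetical.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 5) -> set:
    """Return the set of k-word shingles from lowercased text, ignoring punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    # Texts shorter than k words yield a single, shorter shingle.
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set, b: set) -> float:
    """Intersection size over union size; defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_campaigns(comments: dict, threshold: float = 0.6) -> list:
    """Cluster comment IDs whose texts are near-duplicates (single-linkage via union-find).

    `threshold` is a hypothetical cutoff; real use would need tuning and manual review.
    """
    parent = {cid: cid for cid in comments}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    sigs = {cid: shingles(text) for cid, text in comments.items()}
    for a, b in combinations(comments, 2):
        if jaccard(sigs[a], sigs[b]) >= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for cid in comments:
        groups.setdefault(find(cid), set()).add(cid)
    # Singletons are independent comments; only multi-member groups suggest a campaign.
    return [g for g in groups.values() if len(g) > 1]


if __name__ == "__main__":
    letter = ("As a practicing physician I urge ONC to retain the safety "
              "enhanced design requirements in full.")
    comments = {
        "c1": letter,
        "c2": letter + " Thank you.",
        "c3": "FHIR readiness varies widely, so C-CDA should be phased out gradually.",
    }
    print(find_campaigns(comments))
```

Pairwise comparison is quadratic, which is manageable for a few hundred comments; a larger docket would usually switch to MinHash or a similar approximate method.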

How I Built It

The analysis site is at hti5.org. It’s open source. Every piece of the pipeline is published.

The pipeline

Data collection: All 305 submissions were downloaded from regulations.gov (298 unique after deduplication)

AI-assisted summarization: Each comment was summarized, classified by position, tagged with themes, and categorized by organization type

Quality assessment: Every comment scored on its understanding of the proposed rule (0–5) and on whether its recommendations would be a “logical outgrowth” of the proposal, the legal test courts use to decide whether changes in a final rule were adequately noticed in the proposed rule

Provision and posture analysis: AI-assisted identification of which specific provision of the NPRM (notice of proposed rulemaking) each comment addresses, with a recommended agency response
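The steps above are described without code. As a rough sketch of the first two stages, assuming submissions have already been extracted to plain text: exact duplicates can be collapsed by hashing case- and whitespace-normalized text, and each AI-generated assessment can be validated against a fixed schema before it is used. The field names, the allowed position labels, and the `CommentAssessment` class are hypothetical and not taken from the hti5 repository; only the 0–5 understanding scale and the logical-outgrowth flag come from the post.

```python
import hashlib
import json
import re
from dataclasses import dataclass

# Hypothetical label set, loosely mirroring the position buckets the post reports.
POSITIONS = {"strongly_oppose", "oppose", "support", "mixed_or_neutral", "unclear"}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def dedupe(submissions: dict) -> dict:
    """Keep the first submission for each distinct normalized text (exact duplicates only)."""
    seen = set()
    unique = {}
    for sub_id, text in submissions.items():
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique[sub_id] = text
    return unique


@dataclass(frozen=True)
class CommentAssessment:
    comment_id: str
    position: str
    themes: tuple
    org_type: str
    understanding: int        # 0-5 score for grasp of the proposed rule
    logical_outgrowth: bool   # could the recommendation be adopted as a logical outgrowth?

    def __post_init__(self) -> None:
        # Reject labels and scores outside the rubric instead of silently keeping them.
        if self.position not in POSITIONS:
            raise ValueError(f"unknown position: {self.position!r}")
        if not 0 <= self.understanding <= 5:
            raise ValueError(f"understanding must be 0-5, got {self.understanding}")


def parse_assessment(comment_id: str, model_output: str) -> CommentAssessment:
    """Parse a model's JSON output into a validated record; malformed output raises."""
    data = json.loads(model_output)
    return CommentAssessment(
        comment_id=comment_id,
        position=data["position"],
        themes=tuple(data.get("themes", [])),
        org_type=data["org_type"],
        understanding=int(data["understanding"]),
        logical_outgrowth=bool(data["logical_outgrowth"]),
    )


if __name__ == "__main__":
    raw = {"A-1": "Retain  SED.", "A-2": "retain sed.", "A-3": "Phase C-CDA out slowly."}
    print(len(dedupe(raw)))  # 2
    a = parse_assessment("A-1", '{"position": "oppose", "themes": ["patient_safety"], '
                                '"org_type": "clinician", "understanding": 4, '
                                '"logical_outgrowth": true}')
    print(a.position, a.understanding)
```

Validating every record against the same schema is one way to back up the claim that all comments were analyzed identically: an assessment that doesn’t fit the rubric fails loudly rather than slipping into the results.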

Why transparency matters here

When you use AI to analyze public comments on a federal rule, credibility is everything. If the analysis looks biased, it’s worse than no analysis.

So I published everything:

The exact prompts used for assessment

The scoring methodology and rubric

Every script used to generate scores

The full NPRM text used as ground truth

A dedicated Process page explaining the entire pipeline

No cherry-picking. No filtering. No steering toward conclusions. All 298 comments analyzed identically. If you think the analysis is wrong, you can check it, and you can tell me (by clicking the “comments” link).

Iterating in the open

The analysis wasn’t perfect on the first pass. The initial version used keyword-counting heuristics to identify which rule provision a comment addressed. Spot-checking revealed that 55% of comments were lumped into “multiple provisions” with generic boilerplate rationales. I documented the problem, rebuilt the analysis with AI-assisted comment-level review, and published the fix.

This is the kind of correction that usually happens behind closed doors. I’d rather do it in the open.
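To illustrate how a keyword-counting approach can collapse into “multiple provisions,” here is a toy version. The provision names and keyword lists are invented for illustration and are not the rule’s actual provisions or the original hti5 heuristic. A comment is assigned to a provision only when that provision’s keyword count leads the runner-up by some margin; otherwise it falls into the fallback bucket.

```python
from collections import Counter

# Hypothetical provision -> keyword map, invented for illustration only.
PROVISION_KEYWORDS = {
    "safety_enhanced_design": ["safety-enhanced", "usability", "user-centered"],
    "c_cda": ["c-cda", "ccda", "consolidated cda"],
    "cds_transparency": ["decision support", "cds", "algorithm"],
}


def classify_by_keywords(comment: str, margin: int = 2) -> str:
    """Assign a comment to the provision with the most keyword hits.

    If no provision leads the runner-up by at least `margin` hits, fall back to
    "multiple provisions", which is where broad comments tend to land.
    """
    text = comment.lower()
    # Count substring occurrences of each provision's keywords.
    hits = Counter({
        provision: sum(text.count(kw) for kw in keywords)
        for provision, keywords in PROVISION_KEYWORDS.items()
    })
    (top, top_n), (_, second_n) = hits.most_common(2)
    if top_n == 0:
        return "none"
    if top_n - second_n < margin:
        return "multiple provisions"
    return top


if __name__ == "__main__":
    focused = ("Usability testing and user-centered design must stay. "
               "Usability failures harm patients.")
    broad = "Keep usability testing, phase out C-CDA slowly, and extend CDS transparency to AI."
    print(classify_by_keywords(focused))  # safety_enhanced_design
    print(classify_by_keywords(broad))    # multiple provisions
```

The broad comment takes a clear position on three provisions, yet it ties across all three and lands in the fallback bucket. That is one plausible way a frequency-based heuristic ends up with a large “multiple provisions” share; reviewing each comment individually avoids relying on raw keyword counts.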

What ONC Should Hear

Based on the comment record, seven policy recommendations emerged. The most important:

Retain all safety certification requirements. Modernize, don’t remove. The patient safety community — ECRI, the Anesthesia Patient Safety Foundation, the AMA, and individual clinicians — unanimously opposed removing safety criteria established in response to documented patient harm. As I put it in my comments: “We do not remove seatbelt requirements because cars have seatbelts. The requirement is what made them so.”

The other recommendations cover phasing the C-CDA transition, strengthening CDS transparency for AI systems, protecting USCDI data classes for vulnerable populations, and improving information blocking enforcement.

Why I Built This

Federal rulemaking comments are public. They’re also nearly impossible to read. Regulations.gov gives you a list of PDFs. If you want to understand what 298 commenters actually said in aggregate, with themes and patterns, you’re on your own.

I think that’s a problem. Public comments are supposed to inform policy. If nobody can read them, they can’t do their job.

So I built a tool that makes them readable, searchable, and analyzable. It’s not perfect, but it’s a start. And because every piece is open source, anyone can improve it.

Explore the analysis: hti5.org

Source: github.com/jacobmr/hti5